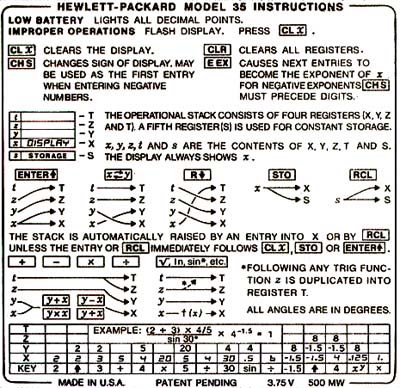

The following specifications have been derived from the instructions printed on the back of the calculator. (Image from http://www.hpmuseum.org/hp35.htm.)

Low battery lights all decimal points.

| eg.Calculator | | |
|---|---|---|
| volts | watts() | points() |
| 3.75 | .500 | false |
| 3.60 | .500 | false |
| 3.45 | .500 | false |
| 3.30 | .500 | true expected false actual |

Improper operations flash display. Press clx.

| eg.Calculator | | |
|---|---|---|
| key | x() | flash() |
| 100 | 100 | false |
| enter | 100 | false |
| 0 | 0 | false |
| / | 0 expected Infinity actual | true expected false actual |
| clx | 0 | false |

clx clears the display.

| eg.Calculator | | | | |
|---|---|---|---|---|
| key | x() | y() | z() | t() |
| 100 | 100 | 0.0 | 100.0 | 0.0 |
| enter | 100 | 100 | 0.0 | 100.0 |
| enter | 100 | 100 | 100 | 0.0 |
| enter | 100 | 100 | 100 | 100 |
| clx | 0 | 100 | 100 | 100 |

clr clears all registers.

| eg.Calculator | | | | |
|---|---|---|---|---|
| key | x() | y() | z() | t() |
| 100 | 100 | 0.0 | 100.0 | 100.0 |
| enter | 100 | 100 | 0.0 | 100.0 |
| enter | 100 | 100 | 100 | 0.0 |
| enter | 100 | 100 | 100 | 100 |
| clr | 0 | 0 | 0 | 0 |

chs changes sign of display. May be used as the first entry when entering negative numbers.

| eg.Calculator | | |
|---|---|---|
| key | x() | y() |
| 100 | 100 | 0 |
| chs | -100 | 0 |
| chs | 100 | 0 |
| enter | 100 | 100 |
| chs | -0 expected -100.0 actual | 100 |
| 100 | -100 expected 100.0 actual | 100 expected -100.0 actual |

eex causes next entries to become the exponent of x. For negative exponents chs must precede digits.

The operational stack consists of four registers (x, y, z and t). A fifth register (s) is used for constant storage.

The stack is automatically raised by an entry into x or by rcl unless the entry or rcl immediately follows clx, sto or enter.

Following any trig function z is duplicated into register t.

All angles are in degrees.
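The stack-lift rule above can be sketched in Java, the language of the linked Calculator.java. This is a minimal illustration, not the eg.Calculator source: the class name `StackSketch` and all of its members are hypothetical, it accepts complete numbers rather than digit keys, and it leaves out sto, rcl and the s register. Keeping t unchanged when the stack drops after an addition is an assumption about the hardware that the printed instructions do not state.

```java
// Illustrative sketch only; names are hypothetical, not taken from eg.Calculator.
class StackSketch {
    double x, y, z, t;            // the four operational registers
    boolean liftEnabled = true;   // false immediately after clx or enter

    // Enter a complete number, raising the stack first unless lift is disabled.
    void number(double value) {
        if (liftEnabled) {
            raise();
        }
        x = value;
        liftEnabled = true;
    }

    // enter copies x into y (pushing y and z upward) and disables the next lift.
    void enter() {
        raise();
        liftEnabled = false;
    }

    // clx zeroes the display and disables the next lift.
    void clx() {
        x = 0;
        liftEnabled = false;
    }

    // Addition consumes x and y and drops the stack.
    // Assumption: t keeps its value and is copied down into z.
    void add() {
        x = y + x;
        y = z;
        z = t;
        liftEnabled = true;
    }

    // Shift every register up by one; the old t is lost.
    private void raise() {
        t = z;
        z = y;
        y = x;
    }

    public static void main(String[] args) {
        StackSketch s = new StackSketch();
        s.number(100);
        s.enter();
        s.enter();
        s.enter();
        s.clx();
        // Prints "0.0 100.0 100.0 100.0", the expected values of the clx table.
        System.out.println(s.x + " " + s.y + " " + s.z + " " + s.t);
    }
}
```

Tracking a single `liftEnabled` flag keeps the rule in one place: every key either sets or clears it, and only number entry consults it.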

Example (2+3) * (4/5) / sin(30) * (4^-1.5) = 1.0000

| eg.Calculator | | |
|---|---|---|
| key | x() | y() |
| 2 | 2 | 100.0 |
| enter | 2 | 2 |
| 3 | 3 | 2 |
| + | 5 | 2.0 |
| 4 | 4 | 5 |
| * | 20 | 2.0 |
| 5 | 5 | 20 |
| / | 4 | 2.0 |
| 30 | 30 | 4 |
| sin | .5 | 4 |
| / | 6 expected 8.000000000000002 actual | 2.0 |
| -1.5 | -1.5 | 8 |
| enter | -1.5 | -1.5 |
| 4 | 4 | -1.5 |
| x^y | .125 | 8 expected -1.5 actual |
| * | 1.0000 expected -0.18750000000000006 actual | 8.000000000000002 |

| fit.Summary | |
|---|---|
| counts | 75 right, 9 wrong, 0 ignored, 0 exceptions |
| input file | /Users/ward/Documents/Java/fit/Release/Documents/CalculatorExample.html |
| input update | Mon Sep 15 16:47:24 PDT 2003 |
| output file | /Users/ward/Documents/Java/fit/Release/Reports/CalculatorExample.html |
| run date | Mon Sep 15 16:51:18 PDT 2003 |
| run elapsed time | 0:00.32 |

You can run this document as it stands right now against a calculator implemented at c2.com using the RunScript below. You will find that the tests, the fixture and the calculator code are all still incomplete. A failing test turns its cell red and shows two values: the expected result on top and the actual result below it.

- http:run.cgi -- invoke on c2.com and view results here

- http:hp35.cgi -- model constrained generated test data

- http:Release/Source/eg/Calculator.java -- the HP35 calculator and its fixture

I'd like to see a manual interface to this calculator. It would provide keys and a display of the x value, just like a regular calculator. Doing this would point out some interesting differences between the programming and manual interfaces. One issue I'm curious about is that the keys would be single digits, not complete numbers, so some mechanism would be needed to convert digit presses into numbers. A manual interface would also help illustrate how you can test by going under the GUI, a concept that testers often find hard to grasp.
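One way to picture the conversion from digit keys to complete numbers is a small entry buffer that sits between the keyboard and the calculator. The sketch below is only an illustration in Java: `DigitEntry` and its methods are hypothetical names, and chs and eex handling are deliberately left out.

```java
// Hypothetical helper, not part of the Fit release or of eg.Calculator.
class DigitEntry {
    private final StringBuilder digits = new StringBuilder();
    private boolean hasPoint = false;

    // Accept one key: '0'..'9' or '.'; any other key is ignored in this sketch.
    void press(char key) {
        if (key >= '0' && key <= '9') {
            digits.append(key);
        } else if (key == '.' && !hasPoint) {
            digits.append(key);
            hasPoint = true;
        }
    }

    // The number that the keys typed so far would place in x.
    double value() {
        String typed = digits.toString();
        if (typed.isEmpty() || typed.equals(".")) {
            return 0.0;
        }
        return Double.parseDouble(typed);
    }

    // Start a fresh entry, for example after enter or an operator key.
    void clear() {
        digits.setLength(0);
        hasPoint = false;
    }
}
```

A manual interface could call `press` for each key and hand `value()` to the same calculator object that the Fit fixture drives, so both interfaces would exercise identical logic underneath.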

I have some comments on the Perl hp35 simulator. I'm realizing that they'd be easier to make if I could cite tests, but that is hard because the tests are random. Thus my first suggestion: random tests should log the seed used to generate them, and the script should accept a seed as an argument to regenerate the same test. This is a general rule for random tests that I didn't get around to including in LessonsLearned. It would also let me create a URL to a specific instance of a randomly generated test, which would help me make my next suggestion.
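The seed suggestion can be sketched as follows. The actual generator (hp35.cgi) is a Perl script whose internals are not shown on this page, so this Java version is purely illustrative: the class name `SeededKeyTest`, the key list and the way keys are chosen are all assumptions.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

// Illustrative only; hp35.cgi may generate its tests quite differently.
class SeededKeyTest {
    // Hypothetical key set for the sketch.
    static final String[] KEYS = {"enter", "+", "-", "*", "/", "chs", "clx"};

    // The same seed always yields the same key sequence.
    static List<String> generate(long seed, int length) {
        Random random = new Random(seed);
        List<String> keys = new ArrayList<>();
        for (int i = 0; i < length; i++) {
            if (random.nextBoolean()) {
                keys.add(String.valueOf(random.nextInt(1000)));
            } else {
                keys.add(KEYS[random.nextInt(KEYS.length)]);
            }
        }
        return keys;
    }

    public static void main(String[] args) {
        // Take the seed from the command line if given, otherwise pick one.
        long seed = args.length > 0 ? Long.parseLong(args[0]) : System.nanoTime();
        // Log the seed so that this exact test can be regenerated and cited.
        System.out.println("seed: " + seed);
        System.out.println(generate(seed, 10));
    }
}
```

Running the program with a logged seed as its argument reproduces the same sequence, which is what would make a generated test citable by URL.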

Second suggestion: many of the tests using the hp35 simulator fail because of differences in precision. I would think you'd want your fixture to accept differences beyond a certain precision, perhaps by setting a tolerance. Any tester who reported a bug simply because the implementation calculated to a different level of precision than the oracle would be dismissed as wasting people's time. The fixtures need to be more sensitive to this. -- Oh, I see that you (claim to) have addressed this in ScientificPrecision. Then why am I still seeing these problems?
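A tolerance check of the kind suggested here might look like the sketch below. It is not how ScientificPrecision works (that code is not shown on this page); `Tolerance.closeEnough` and its relative-tolerance parameter are hypothetical names chosen for illustration.

```java
// Hypothetical comparison helper, not part of the Fit release.
class Tolerance {
    // True when expected and actual agree to within relTol, relative to the larger magnitude.
    static boolean closeEnough(double expected, double actual, double relTol) {
        if (expected == actual) {
            return true;  // exact match, including two equal infinities
        }
        if (Double.isInfinite(expected) || Double.isInfinite(actual)) {
            return false; // an infinity never approximates a different value
        }
        double scale = Math.max(Math.abs(expected), Math.abs(actual));
        // Any comparison involving NaN is false, so NaN never passes.
        return Math.abs(expected - actual) <= relTol * scale;
    }

    public static void main(String[] args) {
        System.out.println(closeEnough(8.0, 8.000000000000002, 1e-9));       // true
        System.out.println(closeEnough(6.0, 8.000000000000002, 1e-9));       // false
        System.out.println(closeEnough(0.0, Double.POSITIVE_INFINITY, 1e-9)); // false
    }
}
```

With a check like this, a result such as 8.000000000000002 would pass against an expected 8, while a genuinely wrong expectation such as 6 would still fail.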

Hey, I'm seeing something strange with the calculator model. I generate a test (more) and then run it (run), and the test that is run is NOT THE SAME as the test that I just generated. This might be easier to track down if I could specify seeds.

-- BretPettichord